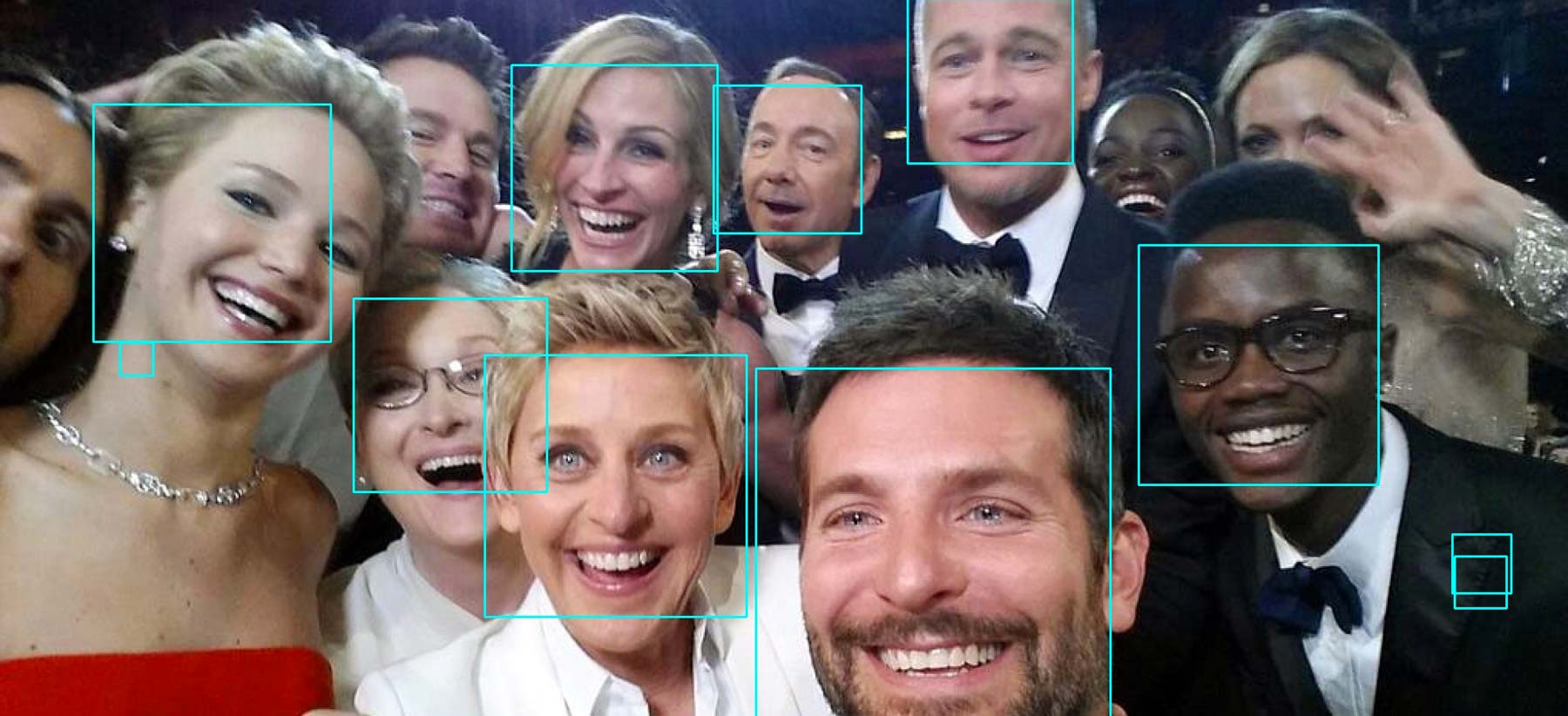

The result of running face-boxer.py on Ellen DeGeneres’s Oscar selfie.

Build face-grep in Python

This is our first foray into our overall non-existent exploration of the Python programming language and its highly useful scientific libraries. The library we use in this exercise is the Python wrapper for OpenCV, which contains a number of useful image-processing functions, including face detection.

This exercise assumes you have little to no actual Python experience, and even less experience with Haar-cascades or the Viola-Jones object detection framework. And that’s OK; you will never know enough about any given topic (not just in programming). What this exercise tests is your ability to take what you know and apply it to a mostly unfamiliar syntax and situation.

In this case, you will be asked to modify an existing Python script, which can be found here.

What you do know by now is how to (kind of) read difficult Bash syntax, and you know the Unix philosophy of how programs should behave and be designed to work with each other. The face-boxer.py script includes all the code needed to detect faces (or any other object) in a given image file. For this exercise, you will modify it so that it, just as the Unix philosophy suggests, uses text as a universal interface. Instead of having it draw boxes around faces, you will modify face-boxer.py to print its results to standard output so that another program, such as ImageMagick’s crop tool, can decide what to do with them. Knowing Python would obviously make this trivial. But knowing the fundamentals of programming still gives you enough to modify the program and use it.

Deliverables

A folder named `homework/python-fun`

This will be a new folder that will, for now, contain just the face-grep.py script:

|-compciv/

|-homework/

|--python-fun/

|--face-grep.py

|--data-hold

|--classifiers

|--haarcascades/haarcascade_frontalface_default.xml

The face-grep.py script

The face-grep.py script will be a modification of the face-boxer.py script.

Given a Haar-cascade.xml file, and paths to one or more image files, face-grep.py will detect objects in each of the image files. For each detected object, face-grep.py will print to standard output:

- the name of the image file

- the x-coordinate of the top-left corner of the detected object

- the y-coordinate of the top-left corner

- the pixel width of the detected object

- the pixel height of the detected object

Example:

python face-grep.py data-hold/some-classifier.xml photo1.jpg photo2.jpg

Returns:

photo1.jpg:40,50,100,200

photo1.jpg:300,200,90,90

photo2.jpg:9,12,40,70
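To see why this plain-text format is handy, here is a minimal sketch of how another program might consume it. The function names `parse_face_line` and `crop_geometry` are made up for this illustration and are not part of face-boxer.py. The sketch assumes every line follows the `filename:x,y,w,h` pattern shown above, and it builds the `WIDTHxHEIGHT+X+Y` geometry string that ImageMagick's `-crop` option expects. It uses `print(...)` with parentheses so that it runs under both Python 2 and Python 3.

```python
def parse_face_line(line):
    """Split one 'filename:x,y,w,h' line into its five parts."""
    # split on the LAST colon, in case the filename itself contains one
    filename, coords = line.strip().rsplit(":", 1)
    x, y, w, h = [int(n) for n in coords.split(",")]
    return filename, x, y, w, h


def crop_geometry(x, y, w, h):
    """Build an ImageMagick-style geometry string: WIDTHxHEIGHT+X+Y."""
    return "%dx%d+%d+%d" % (w, h, x, y)


# the example output from above, as a list of lines
sample_output = [
    "photo1.jpg:40,50,100,200",
    "photo1.jpg:300,200,90,90",
    "photo2.jpg:9,12,40,70",
]

for line in sample_output:
    filename, x, y, w, h = parse_face_line(line)
    print("%s -> %s" % (filename, crop_geometry(x, y, w, h)))
# prints:
# photo1.jpg -> 100x200+40+50
# photo1.jpg -> 300x200+90+90
# photo2.jpg -> 40x70+9+12
```

With a geometry string like that in hand, a crop command would look something like `convert photo1.jpg -crop 100x200+40+50 face.jpg`, where `face.jpg` is just a placeholder output name.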

The face-boxer.py script

For this exercise, you will be modifying an existing Python script named face-boxer.py, which does the work of calling the appropriate OpenCV functions and configuration. For each image filename passed into face-boxer.py, it creates a new image file with blue boxes drawn around each detected face:

(Obviously, the algorithm and feature data aren't quite perfect, though you can tweak the settings yourself.)

What you have to do is alter face-boxer.py so that it meets the requirements for face-grep.py. In other words, it should not be creating new images, but instead, printing the dimensions of the detected objects.

The motivation for this is that generally, for other projects, drawing sky-blue boxes around faces is not very useful. However, the fact that an object may exist, and where it exists, can be very helpful. Think of going through an Instagram data feed and filtering for photos that have faces (or don't have faces, if you're interested in scenic landscape, non-selfie photos). Or maybe you have hundreds of scanned documents (with hundreds of pages each) that you know have pictures of people, but you would rather not do all the sifting yourself. Personally, I like passing the results of this script to the ImageMagick crop command, whenever I need to produce an array of tightly-cropped portrait photos.
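As a rough sketch of the filtering idea, the snippet below counts faces per file from face-grep.py-style output. The helper names `count_faces` and `without_faces` are hypothetical, and the sketch assumes that an image with no detected faces simply produces no output lines, so finding the face-free photos means comparing against the list of files you passed in.

```python
from collections import Counter


def count_faces(lines):
    """Count how many 'filename:x,y,w,h' lines appear for each filename."""
    counts = Counter()
    for line in lines:
        line = line.strip()
        if not line:
            continue  # skip blank lines
        filename = line.rsplit(":", 1)[0]
        counts[filename] += 1
    return counts


def without_faces(all_filenames, counts):
    """Return the input files that never showed up in the output."""
    return [f for f in all_filenames if counts[f] == 0]


# pretend these are the files we passed in and the lines we got back
inputs = ["photo1.jpg", "photo2.jpg", "landscape.jpg"]
output_lines = [
    "photo1.jpg:40,50,100,200",
    "photo1.jpg:300,200,90,90",
    "photo2.jpg:9,12,40,70",
]

counts = count_faces(output_lines)
print("photo1.jpg has %d faces" % counts["photo1.jpg"])
print("no faces found in: %s" % ", ".join(without_faces(inputs, counts)))
```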

In other words, remember the Unix Philosophy: make your program do one thing and do it well, and have it output something useful to other programs. If you don't know Python, the only way you can do this assignment is by thinking about exactly what you need to do, and figuring out what small changes you have to make.

Trying out face-boxer.py

The full code for face-boxer.py and instructions on how to use it can be found at this Github Gist: https://gist.github.com/dannguyen/cfa2fb49b28c82a1068f

Some basics about Python

Just as bash is used to run Bash scripts from the command line, the python program is used to run Python scripts.

To run Python interactively, i.e. line-by-line, as you normally do when you log into Bash, you run ipython. Then, at the ipython prompt, you start typing in Python commands and hit Enter to see the result. Type exit to get out of ipython and back to the Bash prompt.

The syntax of Python is similar in some ways to Bash (and most other programming languages), but there are major differences…in other words, code that you've written for Bash will not just work in Python. But the point is not to become an expert in Python, but to see if you can recognize any of the programming patterns you know, and also, to practice your ability to change code in any language and observe its effects. In other words, it's just problem solving.

Indentation

In Python, whitespace is treated much differently than in Bash. For example, Python has the concept of variables, which can be assigned and referenced like this:

# Python # Bash equivalent

somevar = 42 # somevar=42

print somevar # echo $somevar

Notice how you can be loose with the space between tokens (e.g. the variable, the equal signs) and how you don't have to use $ to reference the variable's value (and also, note print versus echo).

However, the main error you'll run into when starting out with Python is indentation. Modifying face-boxer.py shouldn't require you to change any indentation, but just be warned…
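Here is a small illustration of what indentation means in practice. Where Bash marks the body of a loop with `do` and `done`, Python uses the indentation itself: the indented lines belong to the loop, and the first line that is not indented comes after it. The variable names are made up for this example, and `print(...)` is written with parentheses so the snippet runs under both Python 2 and Python 3.

```python
numbers = [1, 2, 3]
total = 0

for n in numbers:
    # these indented lines are the loop body: they run once per item
    total = total + n
    print("running total: %d" % total)

# back at the left margin: this runs once, after the loop has finished
print("final total: %d" % total)
# prints:
# running total: 1
# running total: 3
# running total: 6
# final total: 6
```

If you indent that last `print` line by accident, it becomes part of the loop and runs three times instead of once. Python won't complain, because the code is still valid; it just does something different.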

Learning Python for real

If you want to get a better grasp of Python as a language (and I highly recommend it as a general-purpose programming language, as do most prominent tech companies), start off with Zed Shaw's Learn Python the Hard Way. Also, check out the free online version of the indispensable Python Cookbook.

Some basics about OpenCV

The big-picture concept here is that while computers don't just know what a human face looks like, they can be trained to detect a face in an image by being fed lots of images that are labeled as having a face in them. What OpenCV's CascadeClassifier does is essentially judge how likely it is that the pixels of an image are arranged in a similar way to what the computer has been trained to think of as a "face".

The process by which the computer interprets and organizes object data is through feature detection, which in some ways is an abstraction of an object by pixel density/color/arrangement. Here's a helpful diagram from the OpenCV face-detection tutorial:

Here's a pretty good explanation from the OpenCV docs:

Most of you will have played the jigsaw puzzle games. You get a lot of small pieces of a images, where you need to assemble them correctly to form a big real image. The question is, how you do it? What about the projecting the same theory to a computer program so that computer can play jigsaw puzzles? If the computer can play jigsaw puzzles, why can’t we give a lot of real-life images of a good natural scenery to computer and tell it to stitch all those images to a big single image? If the computer can stitch several natural images to one, what about giving a lot of pictures of a building or any structure and tell computer to create a 3D model out of it?

Well, the questions and imaginations continue. But it all depends on the most basic question: How do you play jigsaw puzzles? How do you arrange lots of scrambled image pieces into a big single image? How can you stitch a lot of natural images to a single image?

The answer is, we are looking for specific patterns or specific features which are unique, which can be easily tracked, which can be easily compared. If we go for a definition of such a feature, we may find it difficult to express it in words, but we know what are they. If some one asks you to point out one good feature which can be compared across several images, you can point out one. That is why, even small children can simply play these games. We search for these features in an image, we find them, we find the same features in other images, we align them. That’s it. (In jigsaw puzzle, we look more into continuity of different images). All these abilities are present in us inherently.

So our one basic question expands to more in number, but becomes more specific. What are these features?. (The answer should be understandable to a computer also.)

Again, it's all just 1's and 0's to the computer.
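To make "just numbers" a little more concrete, here is a toy sketch of the kind of arithmetic behind a Haar-like feature, in the spirit of the Viola-Jones framework mentioned earlier. This is not what OpenCV actually runs internally, and the function names are invented for the illustration. It assumes a grayscale image stored as a plain list of rows, and it uses a summed-area table (often called an integral image) so that the sum of any rectangle takes only four lookups.

```python
def integral_image(pixels):
    """Build a table where table[r][c] is the sum of all pixels
    in rows < r and columns < c."""
    rows, cols = len(pixels), len(pixels[0])
    # one extra row and column of zeros keeps the lookups simple
    table = [[0] * (cols + 1) for _ in range(rows + 1)]
    for r in range(rows):
        for c in range(cols):
            table[r + 1][c + 1] = (pixels[r][c] + table[r][c + 1]
                                   + table[r + 1][c] - table[r][c])
    return table


def region_sum(table, x, y, w, h):
    """Sum of the w-by-h rectangle whose top-left corner is (x, y)."""
    return (table[y + h][x + w] - table[y][x + w]
            - table[y + h][x] + table[y][x])


def two_rect_feature(table, x, y, w, h):
    """Top half minus bottom half: large when the top is brighter."""
    half = h // 2
    top = region_sum(table, x, y, w, half)
    bottom = region_sum(table, x, y + half, w, half)
    return top - bottom


# a tiny 4x4 "image": bright on top, dark on the bottom
image = [
    [200, 200, 200, 200],
    [200, 200, 200, 200],
    [50, 50, 50, 50],
    [50, 50, 50, 50],
]

table = integral_image(image)
print(two_rect_feature(table, 0, 0, 4, 4))  # prints 1200
```

A trained classifier combines many small light-versus-dark comparisons like this one, at different positions and sizes, to decide whether a patch of pixels looks like a face.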

Optional: Try it with a webcam

The face-detection algorithm is more fun with a webcam. If you're interested in seeing the face-detection code work via your own webcam, check out this RealPython tutorial. Using your own webcam means you have to have Python and OpenCV installed on your own computer. Mac users, check out this tutorial.

Solution

import cv2
import os
import sys
from string import Template

# first argument is the haarcascades path
face_cascade_path = sys.argv[1]
face_cascade = cv2.CascadeClassifier(os.path.expanduser(face_cascade_path))

# detection settings
scale_factor = 1.1
min_neighbors = 3
min_size = (30, 30)
flags = cv2.cv.CV_HAAR_SCALE_IMAGE

# every remaining argument is the path to an image file
for filename in sys.argv[2:]:
    image_path = os.path.expanduser(filename)
    image = cv2.imread(image_path)
    faces = face_cascade.detectMultiScale(image, scaleFactor = scale_factor, minNeighbors = min_neighbors,
        minSize = min_size, flags = flags)
    # print one line per detected object: filename:x,y,width,height
    for (x, y, w, h) in faces:
        print "%s:%d,%d,%d,%d" % (filename, x, y, w, h)